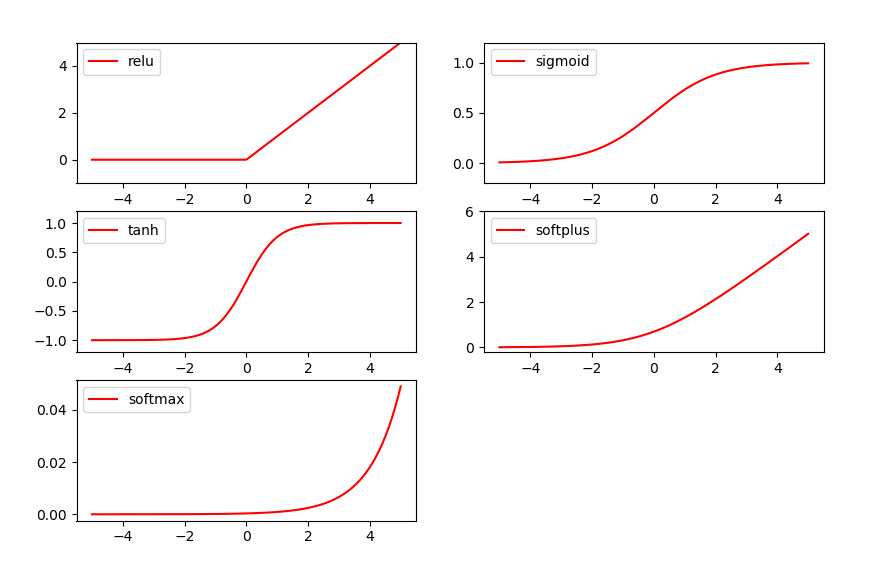

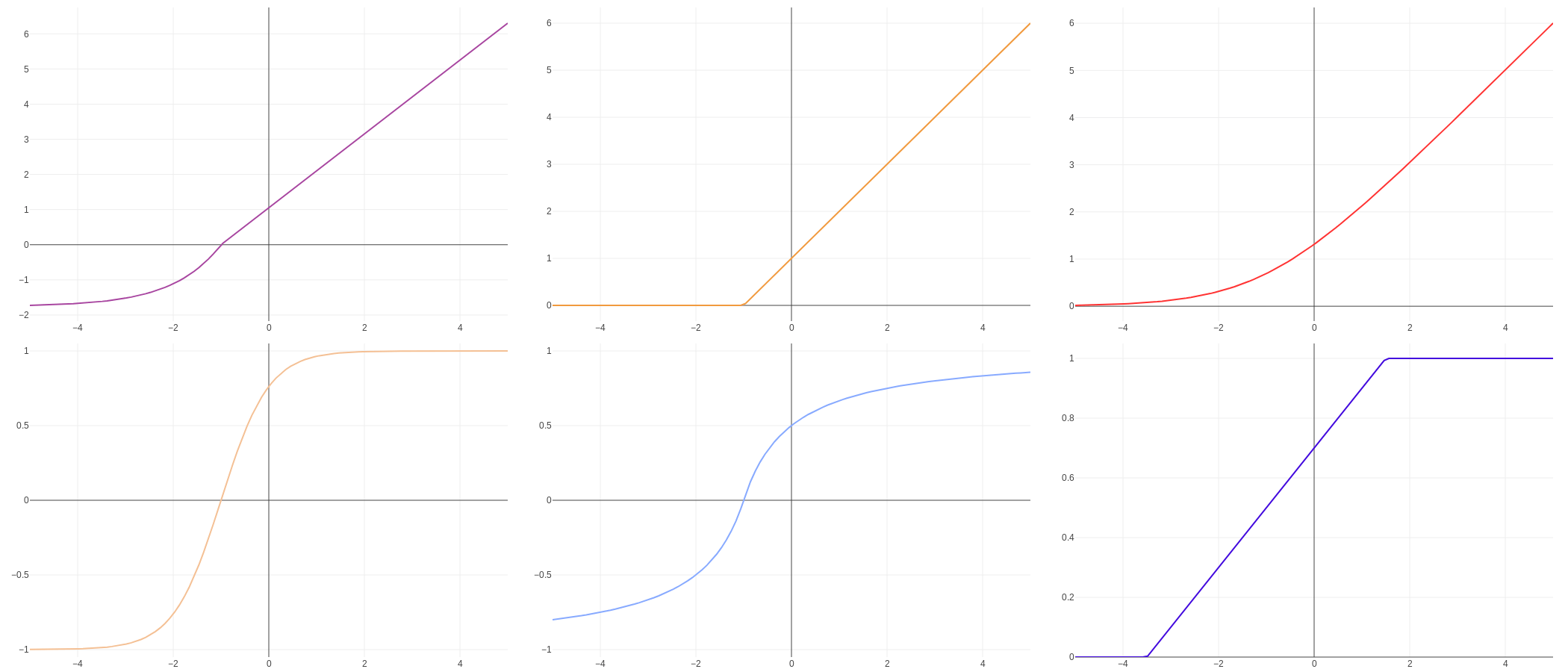

My question is why the UFF converter inserts a shuffle layer that converts the node to NHWC format. The input and output of the swish layer are of shape 32 x 112 x 112. When the output is passed to the next layer, which is a reduce-mean layer, TensorRT shuffles the node to reshape it to NHWC format (112 x 112 x 32). This shuffling is not needed for the depthwise conv layer that takes the swish activation as input, and this is what produces the error. How can I reshuffle it manually? Any help would be deeply appreciated.

Swish [7] is a non-linear activation function proposed by the Google Brain team, and it shows some improvement over ReLU. GELU [8] is another popular smooth activation function. It can be shown that Swish and GELU are both smooth approximations of ReLU.
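To make the layout mismatch in the question concrete, here is a minimal sketch in plain Python. The helper names `permute_shape` and `elementwise_compatible` are hypothetical and are not part of TensorRT, the UFF converter, or graphsurgeon. The compatibility check is a simplified stand-in for a broadcast rule (same rank, each pair of dimensions equal or 1), assumed here only for illustration.

```python
# Hypothetical helpers for illustration only; none of this is TensorRT or UFF API.

def permute_shape(shape, order):
    """Return the shape obtained by reordering axes, as a shuffle/transpose would."""
    return tuple(shape[axis] for axis in order)


def elementwise_compatible(a, b):
    """Simplified broadcast check (assumption): same rank, each dim pair equal or 1."""
    if len(a) != len(b):
        return False
    return all(x == y or x == 1 or y == 1 for x, y in zip(a, b))


chw = (32, 112, 112)                 # swish input/output shape from the question (C, H, W)
hwc = permute_shape(chw, (1, 2, 0))  # shape after a CHW -> HWC shuffle

print(hwc)                                   # (112, 112, 32)
print(elementwise_compatible(chw, chw))      # True
print(elementwise_compatible(chw, hwc))      # False: 32 vs 112 on the first axis
print(permute_shape(hwc, (2, 0, 1)) == chw)  # True: the inverse permutation restores CHW
```

If one operand of an elementwise multiply has been shuffled to HWC while the other is still CHW, a check of this kind fails, which is consistent with the kind of complaint the parser raises. Applying the inverse permutation `(2, 0, 1)` is one way to think about "reshuffling manually".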

I am trying to implement a plugin layer for the swish activation function in TensorRT. The model was initially trained in Keras and was converted to UFF format using the UFF converter in Python. A custom config.py was used in the conversion process. Kindly note that the network has only a single unsupported node, which is the swish activation (API - Activations - TensorLayer 2.2.4 documentation).

Lines from the config: `import graphsurgeon as gs`, `import tensorflow as tf`, the commented-out calls `#dynamic_graph.remove(dynamic_graph.graph_outputs, remove_exclusive_dependencies=False)` and `#dynamic_graph.remove(dynamic_graph.find_nodes_by_path(namespace_remove), remove_exclusive_dependencies=True)`, and `dynamic_graph.collapse_namespaces(namespace_plugin_map)`.

While trying to parse the converted UFF file, I am coming across the following errors:

ERROR: multiply_1/mul: elementwise inputs must have same dimensions or follow broadcast rules (input dimensions were and )

ERROR: conv2d_4/convolution: at least three non-batch dimensions are required for input

ERROR: UFFParser: Parser error: batch_normalization_3/FusedBatchNorm_1: The input to the Scale Layer is required to have a minimum of 3 dimensions.

Activation functions might seem to be a very small component in the grand scheme of hundreds of layers and millions of parameters in deep neural networks, yet their importance is paramount. Activation functions not only help with training by introducing non-linearity, but they also help with network optimization.

In this article we'll explore the 2018 paper by Google Brain titled "Searching for activation functions". The paper proposes a novel activation function called Swish, which was discovered using a Neural Architecture Search (NAS) approach and showed significant improvement in performance compared to standard activation functions like ReLU or Leaky ReLU. We will first take a look at the motivation behind the paper, followed by a dissection of the structure of Swish and its similarities to SILU (Sigmoid Weighted Linear Unit). We will then go through the results when Swish is applied to several NLP tasks, along with the PyTorch code to train your own deep neural networks with Swish.

Nevertheless, this does not mean that ReLU cannot be improved. In October 2017, Prajit Ramachandran, Barret Zoph and Quoc V. Le from Google Brain proposed the Swish activation function. It is a relatively simple function: it is the multiplication of the input x with the sigmoid function for x, and it looks as follows: $f(x) = x \cdot \sigma(x)$, where $\sigma(x) = 1 / (1 + e^{-x})$ is the sigmoid function.

Now, open up modelftswish. The dependencies include Matplotlib and Numpy, to support our model in terms of visualization and number processing, and Tensorflow, which is now the preferred backend for Keras.
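The definition of Swish given above, the input x multiplied by the sigmoid of x, can be sketched in a few lines of standard-library Python. This is only an illustration of the formula and not the code from the paper or from any framework; the function names `sigmoid`, `swish` and `relu` are chosen here for the example.

```python
import math


def sigmoid(x):
    """Logistic sigmoid, written so that math.exp never receives a large positive argument."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def swish(x):
    """Swish as described in the text: the input multiplied by its sigmoid."""
    return x * sigmoid(x)


def relu(x):
    """ReLU, included only for comparison."""
    return max(0.0, x)


# Compare the two activations at a few sample points.
for x in (-5.0, -1.0, 0.0, 1.0, 5.0):
    print(f"x={x:+.1f}  swish={swish(x):+.4f}  relu={relu(x):+.4f}")
```

For large positive inputs the sigmoid factor approaches 1, so Swish approaches x; for large negative inputs it approaches 0. This is the sense in which Swish can be read as a smooth approximation of ReLU, while still dipping slightly below zero for moderately negative inputs.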
